Goals

- Set up distributed RL training with Tinker

- Configure an OpenReward environment for training

- Monitor training progress with WandB

- Train a model on the WhoDunIt environment

Prerequisites

- A Tinker account and API key

- An OpenReward account and API key

- A WandB account and API key

- Python 3.11+

Setup

Tinker is a flexible API for efficiently fine-tuning open-source models with LoRA. In this tutorial, we’ll use it to train a language model on an OpenReward environment using reinforcement learning. First, clone the OpenReward cookbook repository and navigate to the Tinker training example. Then create a `.env` file with your API credentials.
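The exact variable names the cookbook expects are not reproduced here. As a minimal sketch, assuming the `.env` file uses the names `TINKER_API_KEY`, `OPENREWARD_API_KEY` and `WANDB_API_KEY` (assumptions; check the cookbook’s own example), this stdlib-only script parses simple `KEY=VALUE` lines and reports any missing or empty keys before you launch a run.

```python
from pathlib import Path

# Assumed variable names; confirm against the cookbook's own .env example.
REQUIRED_KEYS = ("TINKER_API_KEY", "OPENREWARD_API_KEY", "WANDB_API_KEY")


def parse_env_text(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, ignoring blank lines and # comments."""
    values: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Strip optional surrounding quotes, e.g. KEY="abc".
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_keys(values: dict[str, str], required=REQUIRED_KEYS) -> list[str]:
    """Return the required keys that are absent or set to an empty value."""
    return [key for key in required if not values.get(key)]


if __name__ == "__main__":
    env_path = Path(".env")
    if env_path.exists():
        missing = missing_keys(parse_env_text(env_path.read_text()))
        print("missing:", missing or "none")
    else:
        print(".env not found")
```

This parser deliberately handles only the plain `KEY=VALUE` form; a library such as python-dotenv covers more syntax if you need it.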

Understanding the Training Pipeline

The training pipeline combines three services:

- Tinker provides the distributed compute infrastructure for running training

- OpenReward provides the environments and tasks for the agent to learn from

- WandB tracks metrics, logs, and training progress

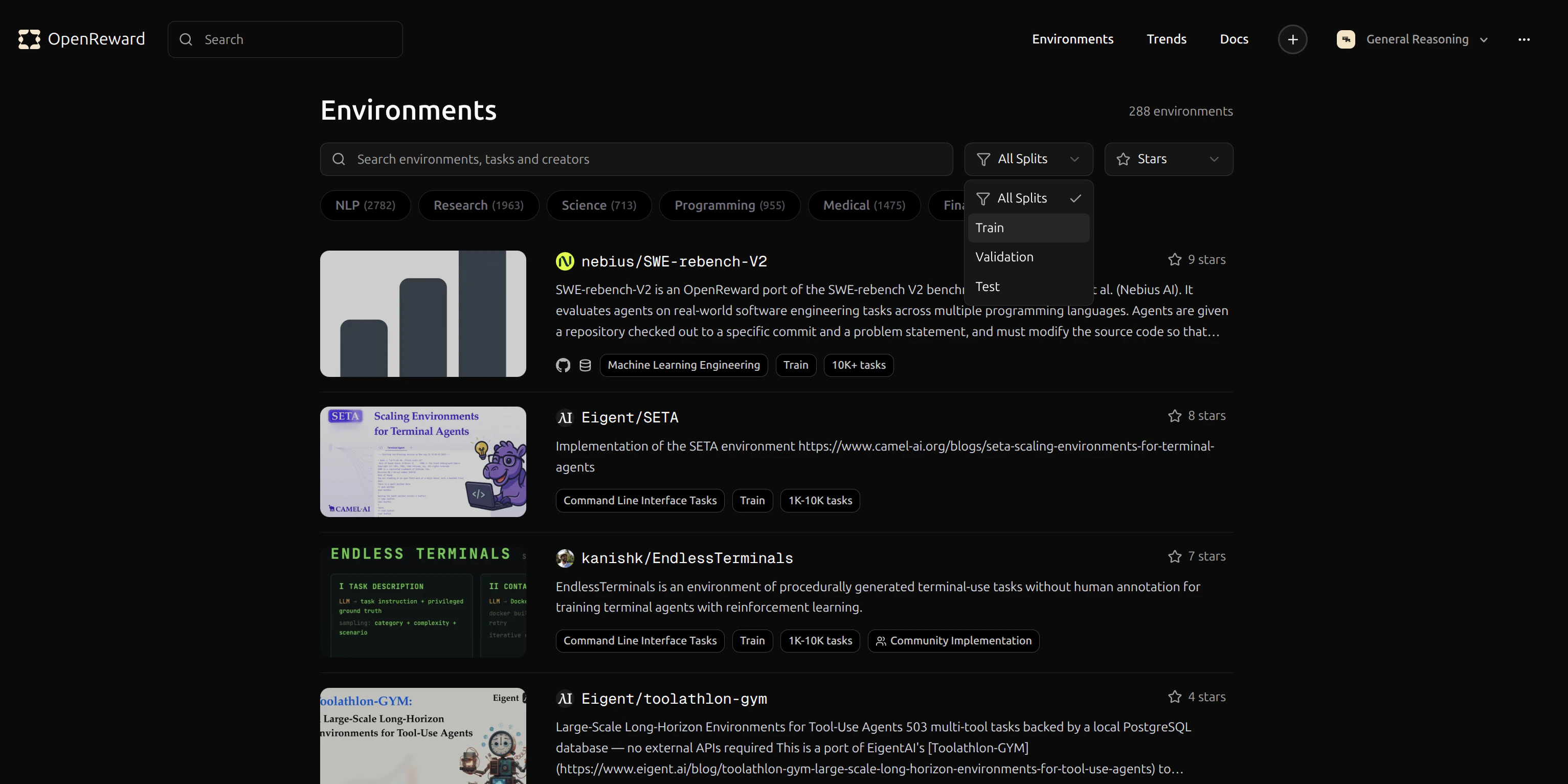

Selecting an Environment

Browse available environments at OpenReward:

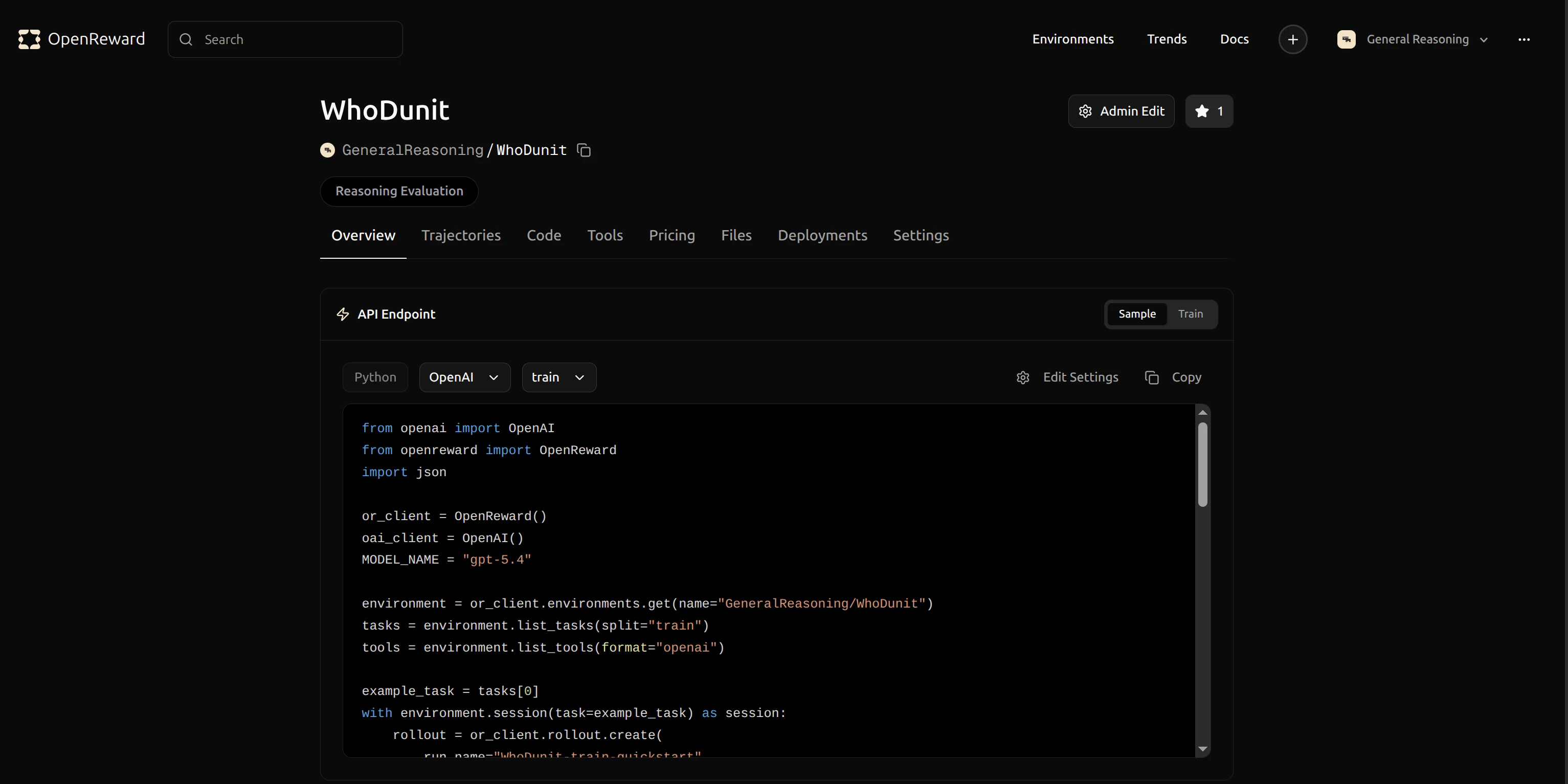

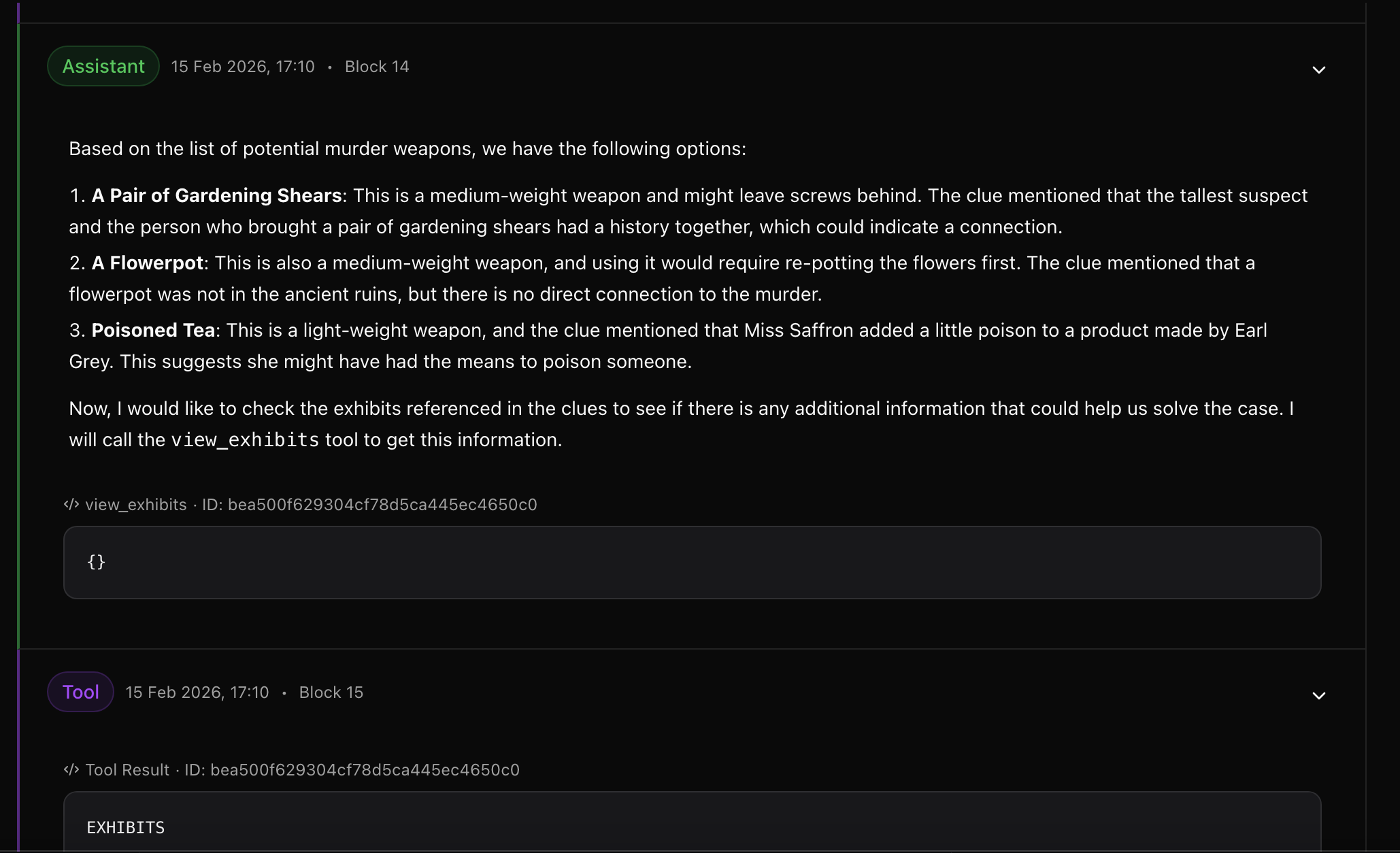

We’ll use the `GeneralReasoning/WhoDunIt` environment for this tutorial. This environment challenges agents to solve mystery scenarios.

Note the environment identifier, `GeneralReasoning/WhoDunIt`, for use in your config.
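The identifier has an `owner/name` shape. A small helper such as the hypothetical `split_env_id` below can catch typos before a run starts; the allowed-character rule it enforces is an assumption for illustration, not OpenReward’s documented naming policy.

```python
import re

# Assumed format: "<owner>/<environment>" using letters, digits, "_", "." or "-".
_ENV_ID = re.compile(r"^([A-Za-z0-9_.-]+)/([A-Za-z0-9_.-]+)$")


def split_env_id(env_id: str) -> tuple[str, str]:
    """Split an environment ID into (owner, name), rejecting malformed IDs."""
    match = _ENV_ID.match(env_id.strip())
    if match is None:
        raise ValueError(f"expected '<owner>/<name>', got {env_id!r}")
    return match.group(1), match.group(2)


print(split_env_id("GeneralReasoning/WhoDunIt"))  # ('GeneralReasoning', 'WhoDunIt')
```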

Configuration

Now we’ll update the configuration file for the training run. Open `tinker-config.yaml` and update the environment configuration to use `GeneralReasoning/WhoDunIt`.

The configuration file also contains these fields:

- `wandb_project_name`: The WandB project where metrics will be logged

- `wandb_run_name`: A name for this specific training run

- `model_name`: The base model to fine-tune (using LoRA)

- `lora_rank`: The rank for LoRA adapters (lower = fewer parameters)

- `batch_size`: Number of samples per training batch

- `learning_rate`: Learning rate for the optimizer

- `save_every`: Save a checkpoint every N batches

- `num_rollouts`: Number of rollouts to collect per environment per batch

- `temperature`: Sampling temperature for the model (1.0 = default)
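To make “lower = fewer parameters” concrete: LoRA freezes a weight matrix W of shape d_out × d_in and trains two small matrices, B (d_out × r) and A (r × d_in), so each adapted matrix adds r × (d_in + d_out) trainable parameters. The sketch below counts them; the model shape (32 layers, four square 4096 × 4096 projections per layer) is a made-up illustration, not the shape of any model used in the cookbook.

```python
def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix.

    LoRA learns B (d_out x rank) and A (rank x d_in) and uses W + B @ A,
    so the count is rank * (d_in + d_out).
    """
    return rank * (d_in + d_out)


def total_lora_params(d_model: int, n_layers: int, mats_per_layer: int, rank: int) -> int:
    """Illustrative total, assuming every adapted matrix is d_model x d_model."""
    return n_layers * mats_per_layer * lora_params(d_model, d_model, rank)


# Hypothetical model shape: 32 layers, 4 square 4096x4096 projections per layer.
for rank in (8, 32, 128):
    print(rank, total_lora_params(4096, 32, 4, rank))
```

At rank 8 this gives 8,388,608 trainable parameters, against roughly 2.1 billion in the frozen matrices themselves; because the count is linear in r, quadrupling `lora_rank` quadruples the adapter size.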

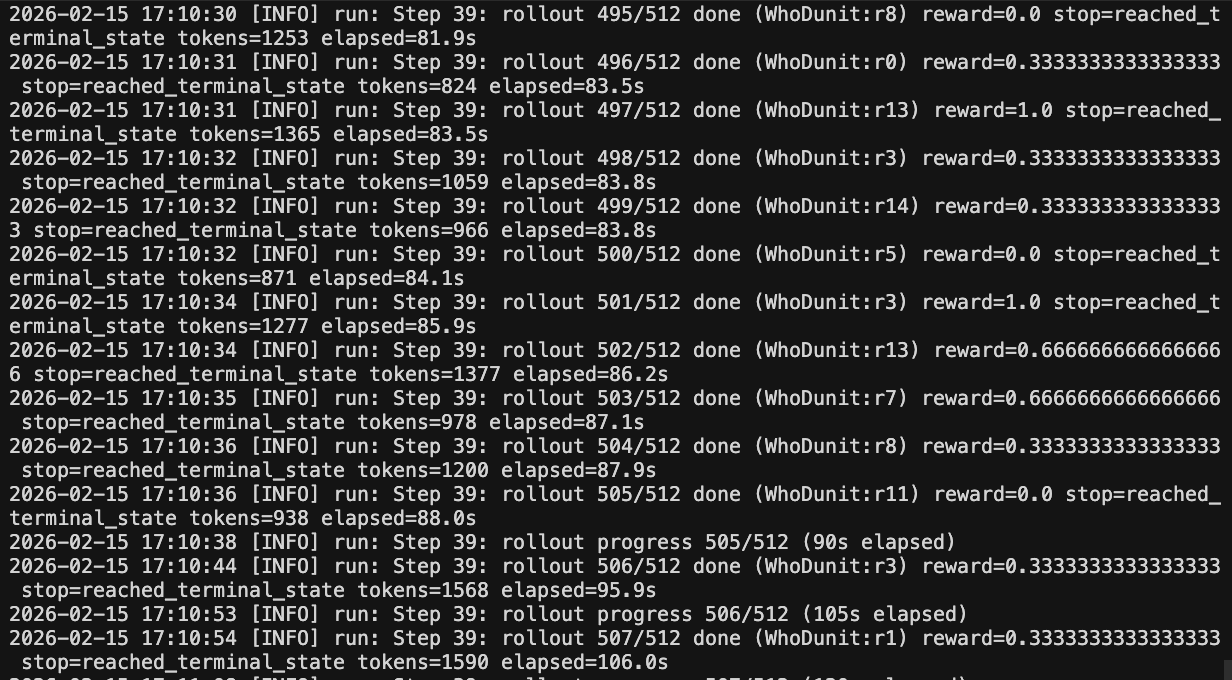
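The `temperature` field rescales the model’s logits before sampling: token probabilities are softmax(logits / T), so T = 1.0 leaves the model’s distribution unchanged, T below 1 sharpens it toward the top token, and T above 1 flattens it. A minimal stdlib illustration with made-up logits for three candidate tokens:

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Return softmax(logits / temperature); temperature must be positive."""
    if temperature <= 0:
        raise ValueError("temperature must be > 0")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.0]  # made-up scores for three candidate tokens
for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Running it shows the top token’s probability falling as T rises, which is why higher temperatures produce more varied rollouts.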

Running Training

Now we can start training. The cookbook includes a `main.py` file with the complete training loop.

Run `main.py` to start training. The script will:

- Load your model and initialize LoRA adapters

- Connect to Tinker’s infrastructure

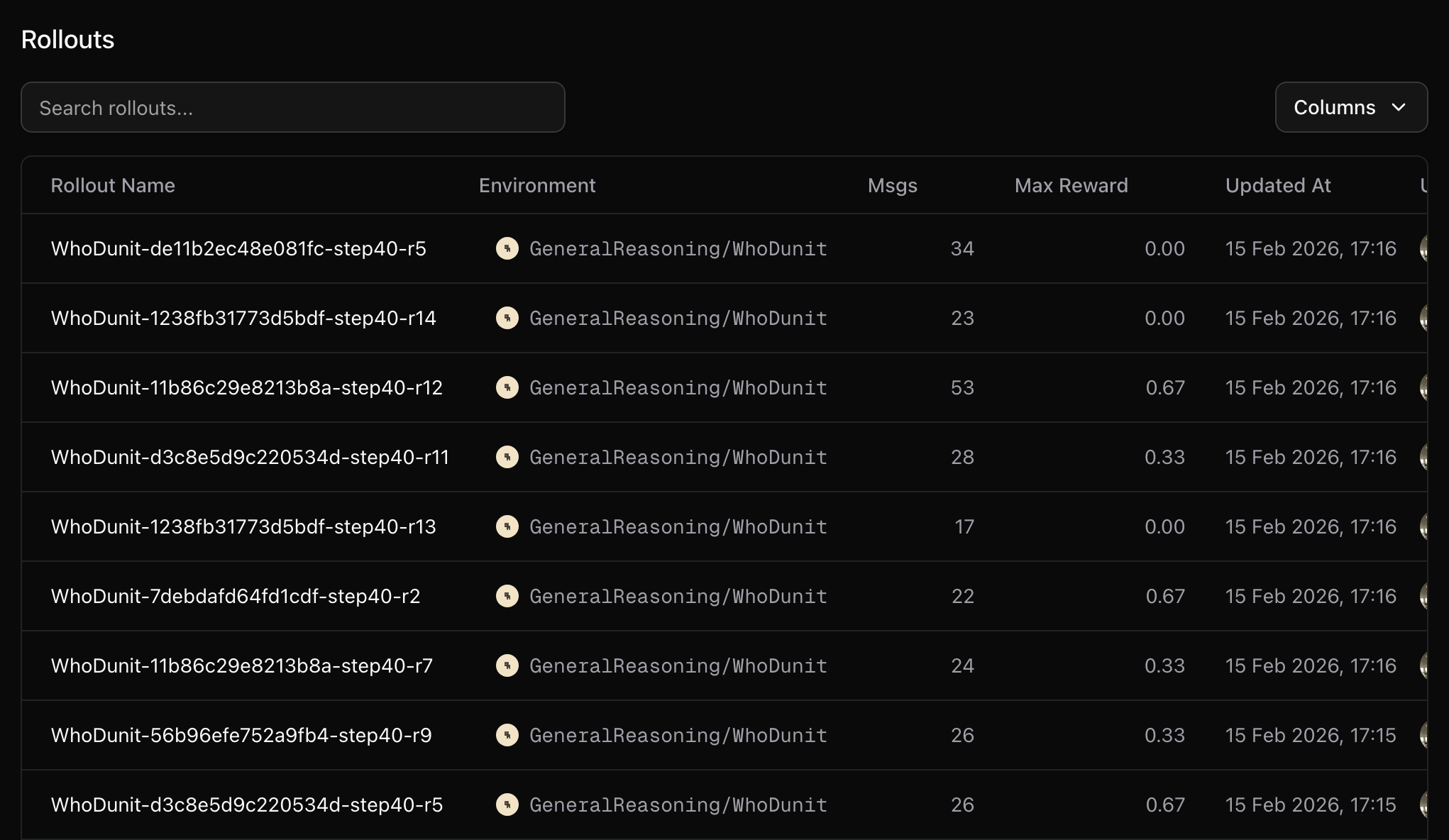

- Sample rollouts from the WhoDunIt environment

- Compute rewards and update the model

- Log metrics to WandB

- Save checkpoints periodically

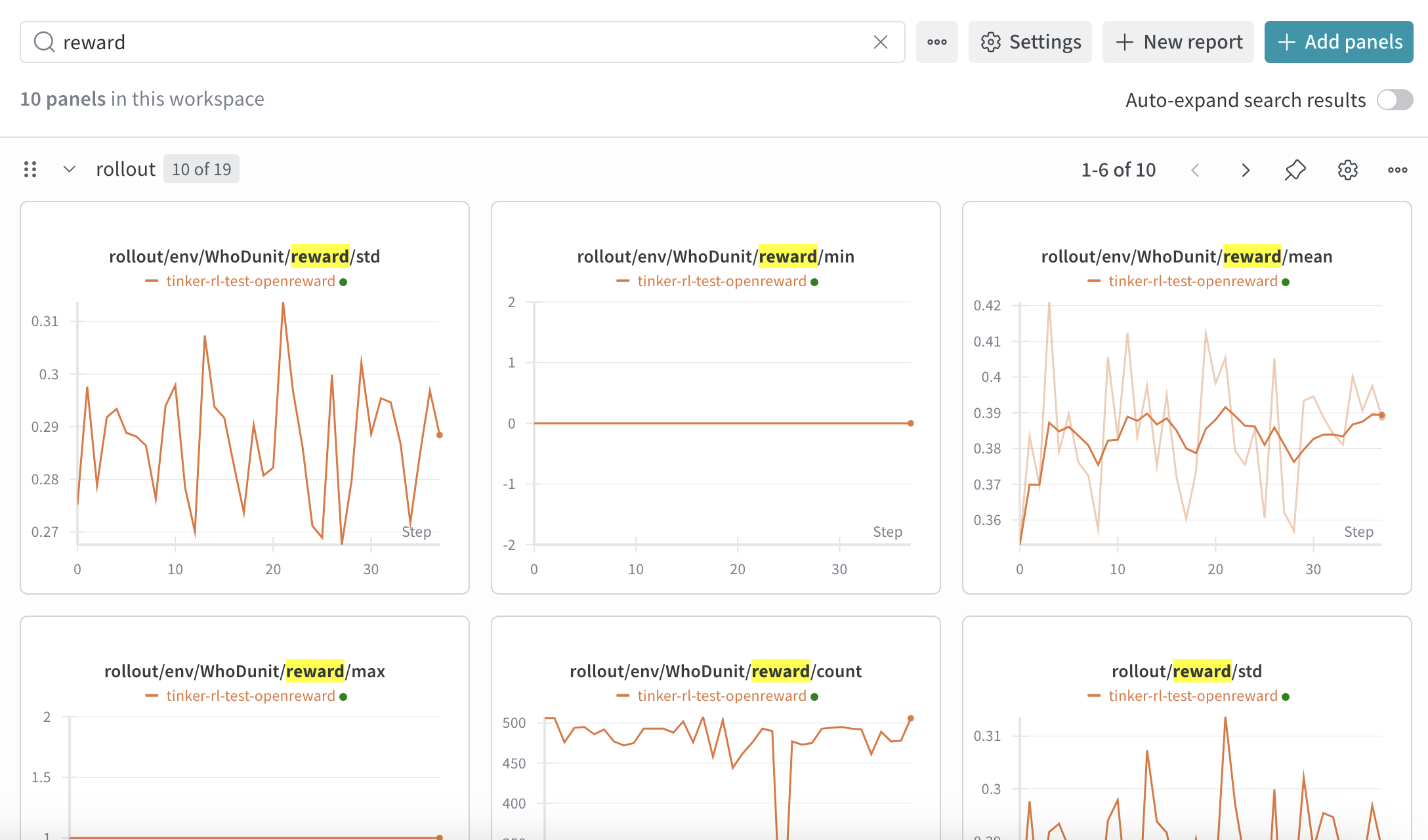
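The steps above follow the usual shape of an on-policy RL loop. The toy sketch below is not `main.py`: `sample_rollouts`, `group_advantages` and `train` are hypothetical stand-ins for the Tinker and OpenReward calls the cookbook makes, and the rewards are random numbers. It assumes each batch draws `batch_size` tasks and collects `num_rollouts` rollouts per task, saves after every `save_every` batches, and uses group-mean-centred advantages (each rollout’s reward minus its group’s mean reward), a common choice that is assumed here rather than taken from the cookbook.

```python
import random
from dataclasses import dataclass


@dataclass
class Rollout:
    task_id: int
    reward: float


def sample_rollouts(task_id: int, num_rollouts: int, rng: random.Random) -> list[Rollout]:
    """Hypothetical stand-in for sampling episodes from the environment."""
    return [Rollout(task_id, rng.random()) for _ in range(num_rollouts)]


def group_advantages(group: list[Rollout]) -> list[float]:
    """Reward minus the group's mean reward (assumed advantage scheme)."""
    mean = sum(r.reward for r in group) / len(group)
    return [r.reward - mean for r in group]


def train(num_batches: int, batch_size: int, num_rollouts: int, save_every: int, seed: int = 0) -> list[int]:
    """Run the toy loop; returns the batch indices where a checkpoint would be saved."""
    rng = random.Random(seed)
    saved: list[int] = []
    task_counter = 0
    for batch in range(num_batches):
        advantages: list[float] = []
        rewards: list[float] = []
        for _ in range(batch_size):
            group = sample_rollouts(task_counter, num_rollouts, rng)
            task_counter += 1
            advantages.extend(group_advantages(group))
            rewards.extend(r.reward for r in group)
        # A real loop would send the rollouts and advantages to the trainer here
        # and log metrics such as the mean reward to WandB.
        mean_reward = sum(rewards) / len(rewards)
        print(f"batch {batch}: {len(rewards)} rollouts, mean reward {mean_reward:.3f}")
        if (batch + 1) % save_every == 0:
            saved.append(batch)  # stand-in for writing a checkpoint
    return saved


if __name__ == "__main__":
    print(train(num_batches=6, batch_size=4, num_rollouts=8, save_every=2))
```

Centring rewards within each group means a rollout is only pushed up if it did better than its siblings on the same task, so tasks that are uniformly easy or hard contribute little gradient.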

Monitoring Training

Your training metrics will appear in your WandB dashboard, where you can track rewards, response lengths and other key metrics in real time. Useful metrics to watch include:

- Training loss over time

- Average reward per episode

- Success rate on tasks

- Learning rate schedule
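If you extend the training loop with your own metrics, WandB accepts them as a flat dictionary through `wandb.log(metrics, step=...)`. The hypothetical `summarize_batch` helper below sketches how one batch of episodes might be reduced to such a dictionary; the key names and the idea that the environment reports a per-episode solved flag are assumptions, not what the cookbook actually logs.

```python
from statistics import mean


def summarize_batch(rewards: list[float], solved: list[bool], response_lengths: list[int]) -> dict[str, float]:
    """Aggregate one batch of episodes into a flat metrics dict.

    Key names are illustrative; in a real run the result would go to wandb.log().
    """
    if not rewards or not (len(rewards) == len(solved) == len(response_lengths)):
        raise ValueError("need equal-length, non-empty inputs")
    return {
        "reward/mean": mean(rewards),
        "reward/max": max(rewards),
        "success_rate": sum(solved) / len(solved),
        "response_length/mean": mean(response_lengths),
    }


metrics = summarize_batch([1.0, 0.0, 0.5, 1.0], [True, False, False, True], [120, 340, 200, 180])
print(metrics)
```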

Log files are also written locally to the run’s log directory (`/tmp/tinker-rl` in our example). These contain detailed information about each training step.

Additional Tips

Some environments require additional secrets, for example environments that use LLM graders or external search APIs. You can configure these secrets in `tinker-config.yaml`.

Next Steps

- Evaluate your model: Learn how to run evaluations on your trained model

- Build your own environment: Create custom environments for training

- Tinker Documentation: Learn more about Tinker’s capabilities